While doing some DNS modifications (for this site, in fact) on AIT Domains, I received the following error message when trying to update the nameservers:


The site’s programmers may know what that means, but I certainly don’t. That’s not the kind of error message that should be public-facing. These guys really need to read Defensive Design for the Web.

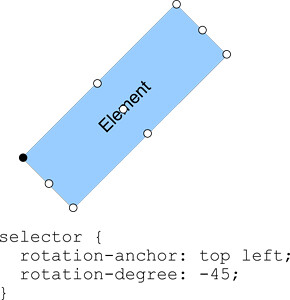

---
title: "Trash + DOM = Treasure?"
date: 2005-05-27 02:42:47
comments: true
tags:
  - "(x)HTML"
  - "CSS"
  - "design"
  - "JavaScript"
description: "I was browsing the popular links on del.icio.us today and stumbled onto Nifty Corners and (via that page) More Nifty Corners. I have to say that I am incredibly impressed with the scripting, but I fear there is something wrong with..."
permalink: /archives/trash-dom-treasure/
---

I was browsing the popular links on del.icio.us today and stumbled onto Nifty Corners and (via that page) More Nifty Corners. I have to say that I am incredibly impressed with the scripting, but I fear there is something wrong with this picture.

Lately, there have been some border wars over the CSS :hover pseudo-class and its forays into the behavior layer. Sure, it’s easier to have CSS do the work sometimes, but that doesn’t make it right. Frankly, I agree with the concept that behavior should be separated from presentation, just as presentation should be separated from content (which is why I use JavaScript to open and close the faux-<select> in my <select> Something New series).

I am also a big believer in clean, semantic markup, so I become concerned when anyone is adding superfluous code to the document to force a design issue. I know some might say I live in a glass house, but when I see someone putting code like this

```html
<div id="container">
  <b class="rtop">
    <b class="r1"></b> <b class="r2"></b>
    <b class="r3"></b> <b class="r4"></b>
  </b>
  <!-- content goes here -->
  <b class="rbottom">
    <b class="r4"></b> <b class="r3"></b>
    <b class="r2"></b> <b class="r1"></b>
  </b>
</div>
```

into their document (even if it is via the DOM), I begin to shudder. Maybe it’s the nagging purist in me, but that just seems wrong.
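To make “inserted via the DOM” concrete, here is a minimal sketch of how a script might add that stack of empty b elements at runtime. This is not the Nifty Corners source; the function names makeCornerStack and addCorners are hypothetical, and the class names simply mirror the markup above.

```javascript
// Hypothetical sketch (not the Nifty Corners source): inject the empty <b>
// stacks shown above into an existing box element at runtime.
function makeCornerStack(wrapperClass, classNames) {
  var wrapper = document.createElement('b');
  wrapper.className = wrapperClass;
  for (var i = 0; i < classNames.length; i++) {
    // Each empty <b> is typically styled as one horizontal slice of the curve.
    var strip = document.createElement('b');
    strip.className = classNames[i];
    wrapper.appendChild(strip);
  }
  return wrapper;
}

function addCorners(box) {
  // Top stack goes before the existing content, bottom stack after it.
  box.insertBefore(makeCornerStack('rtop', ['r1', 'r2', 'r3', 'r4']), box.firstChild);
  box.appendChild(makeCornerStack('rbottom', ['r4', 'r3', 'r2', 'r1']));
}

// Usage in a browser: addCorners(document.getElementById('container'));
```

The result is exactly the non-semantic markup above; the only difference is that it never appears in the served source.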

Are we falling into the old patterns again, forcing design issues through hacky markup? Does the use of non-semantic markup (taking a page from Eric, no doubt) make it OK? Does the fact that it’s inserted via the DOM make it any more valid? Where do we draw the line?

I don’t have the answer, but I think we need to have the conversation.

---
title: "I wanna be a big player"
date: 2005-05-28 23:04:03
comments: false
tags:
  - "business"
description: "I opened the latest issue of Baseline to find a giant 2-page spread for 1&1 (a hosting company), touting their “Dynamic Content Catalog” and its ability to give you “website content like the big players.” Basically, they are offering..."
permalink: /archives/i-wanna-be-a-big-player/
---

I opened the latest issue of Baseline to find a giant 2-page spread for 1&1 (a hosting company), touting their “Dynamic Content Catalog” and its ability to give you “website content like the big players.” Basically, they are offering to syndicate content (news, sports, games, etc.) onto your site, so you no longer have to worry about keeping your site fresh or interesting.

I feel like this is an attempt to reintroduce the idea that every site needs to be a portal (why that concept is still floating about I’ll never know). I also see this as flying in the face of one of the most important business objectives: establishing a brand, voice, etc. through copywriting. If all you have to offer your clients is data with no distillation, why bother?

---
title: "Why Intel?"
date: 2005-06-06 21:19:40
comments: false
tags:
  - "business"
  - "humor"
description: "I’ve been doing a little research lately into new laptops and I am finally starting to understand a little more about processors, etc., so I am dumbfounded to hear that Apple is dumping IBM’s PowerPC chips for Intel’s Pentium line..."
permalink: /archives/why-intel/
---

I’ve been doing a little research lately into new laptops and I am finally starting to understand a little more about processors, etc., so I am dumbfounded to hear that Apple is dumping IBM’s PowerPC chips for Intel’s Pentium line. From my experience, Intel chips a) run really hot and b) suffer from a severe processing bottleneck (3.2 Gigahertz with a 533 Megahertz Front-Side Bus? WTF?). It seems to me that it would have made more sense for Apple to go with AMD; they’ve got incredibly powerful chips which I understand do not suffer from these problems. Maybe there’s something I’m missing; after all, I’m not a chip guy (or a Hollywood mogul).

Enough about the switch; I wanted to share some humor. I love this exchange on Slashdot in reaction to the news:

> Dispel any remaining doubts; we are now living in the evil mirror universe.
>
> I’ll believe that when the Red Sox win the World Series!
>
> Yeah, right, that’s about as likely as finding out who Deep Throat is.

You can read the whole trail if you like.

---
title: "Standardizing Nomenclature"
date: 2005-06-20 14:54:15
comments: false
tags:
  - "(x)HTML"
  - "coding"
  - "web standards"
description: "I agree with Richard: people’s eyes do glaze over when you say “semantic,” but they don’t have to. When Molly & I co-teach or when I am on my own, I always try to strike a balance by alternating “meaningful” and “semantic.” I feel it..."
permalink: /archives/standardizing-nomenclature/
---

I agree with Richard: people’s eyes do glaze over when you say “semantic,” but they don’t have to. When Molly & I co-teach or when I am on my own, I always try to strike a balance by alternating “meaningful” and “semantic.” I feel it is important that “semantic” does not go away because it does have value. That said, it is necessary to relate to your audience, no matter what their level or experience, so I think alternating the terms and showing the interchangeability of the two is beneficial for everyone.

It is also very important to stress the difference between “structure” and “semantics.” Way too many people (myself included) have used these terms interchangeably, when they are not the same. “Semantics” is about meaning whereas “structure” deals with the framework of your markup. Some say structure has only to do with your XHTML skeleton (DOCTYPE, html, head & body), but I view a page like a house. To me the “structure” is the framing upon which you build your roof, walls and floors. In XHTML, that translates not only to your document skeleton, but also to how you use divs to “frame” your content, how you use heading tags to designate content sections, etc.

Confusion arises in some cases when elements are both. In the case of heading tags, they are semantically meaningful (each tag conveying the relative importance of the heading it wraps in relation to the document and the other headings) and structural (forming the document outline). Additional confusion seeps in when we discuss how structural divs should be identified or classified semantically.
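To illustrate the structural half of that claim, here is a rough sketch of how heading levels alone produce a document outline. The { level, text } input format and the buildOutline name are hypothetical; in a browser you would gather those values from the h1 through h6 elements in document order.

```javascript
// Build a nested outline from headings listed in document order.
// Each input item is { level: 1..6, text: '...' } (a hypothetical format).
function buildOutline(headings) {
  var root = { level: 0, text: '', children: [] };
  var stack = [root]; // path from the root to the most recent heading
  for (var i = 0; i < headings.length; i++) {
    var node = { level: headings[i].level, text: headings[i].text, children: [] };
    // Climb back up until the top of the stack outranks this heading.
    while (stack[stack.length - 1].level >= node.level) {
      stack.pop();
    }
    stack[stack.length - 1].children.push(node);
    stack.push(node);
  }
  return root.children;
}
```

A skipped level (an h3 directly under an h1) simply nests under the nearest higher-ranked heading, which is one reason heading choice carries both meaning and structure.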

Confusion over nomenclature like this is something we need to overcome. Our industry is still very new and we are all learning a little more every day. Sharing a common language is very important for communicating effectively (especially in our global community) and is something I think needs to be stressed even more as we move forward. I think this is yet another area where we need to establish standards and, by having discussions like this, we are taking the first steps toward establishing those.

---
title: "RIP DiF"
date: 2005-07-15 03:18:31
comments: true
tags:
  - "business"
description: "Sad news, friends… Design In-Flight is closing shop. This very young, yet stellar PDF-based magazine was off to a fantastic start, but, as Andy put it, changes in his personal and professional life have conspired to make DiF’s..."
permalink: /archives/rip-dif/
---

Sad news, friends… Design In-Flight is closing shop. This very young, yet stellar PDF-based magazine was off to a fantastic start, but, as Andy put it, changes in his personal and professional life have conspired to make DiF’s continued publication an impossibility.

Though I am disappointed at losing such a great publication, I understand where Andy’s coming from. Having spent six years of my life devoted to a magazine I started in college, I know how tough it can be. I put the fritz on hiatus in the summer of 2000 when I moved to Connecticut, hoping to restart it as a solely web-based publication, but time has conspired to keep it only a homepage. Will I ever find the time to get it going again, or is it only wishful thinking? I’m not sure. I guess time will tell.

Andy, best of luck to you in your future endeavors.

---
title: "Halleluiah"
date: 2005-08-02 16:29:14
comments: false
tags:
  - "(x)HTML"
  - "browsers"
  - "CSS"
description: "I finally got around to reading Chris Wilson’s post about standards support in IE7 and I have to admit I am more than a little giddy. I work for an ad agency, and most of our clients (and the majority of my coworkers) have not gotten the whole..."
permalink: /archives/halleluiah/
---

I finally got around to reading Chris Wilson’s post about standards support in IE7 and I have to admit I am more than a little giddy. I work for an ad agency, and most of our clients (and the majority of my coworkers) have not gotten the whole Web Standards thing, mostly because they only use IE. I can’t express how much relief I feel that IE7 is going to fall in line with most of the other browsers out there with regard to standards support.

Improvements of particular note are alpha transparency in PNGs and support for <abbr>s. When it comes to CSS, a whole host of bugs/issues have been fixed (mostly culled from Quirksmode and Position is Everything). I am left with two nagging questions, however:

- Will the * html CSS hack still be supported in IE7, or will it be phased out so * html only affects IE6 and below?

Overall, I think this is a huge step forward. I have to hand it to Chris, the IE team and WaSP’s Microsoft Corporation Task Force for making this all a reality. A very hearty thank you goes out to all of you; you made my year.

UPDATE: A recent blog entry on IE Blog has informed us that the * html selector will not be supported by IE7 in “strict” mode.

Just when I had gotten over the loss of Design In-Flight, the magazine relaunches as a web-only publication. From the history/about page:

> With the reduction in production efforts with this format, and a slightly less rigid publishing schedule, DiF is sure not to disappear again.

I certainly hope so. Welcome back!

---
title: "Estate Tax Thoughts"
date: 2005-08-26 17:53:01
comments: false
tags:
  - "culture & society"
description: "Congress is going back into session after their summer recess and they will be taking a vote on the Estate Tax (or “Death Tax” as some people like to call it). It is a hotly contested issue that I feel very strongly about. The truth is..."
permalink: /archives/estate-tax-thoughts/
---

Congress is going back into session after their summer recess and they will be taking a vote on the Estate Tax (or “Death Tax” as some people like to call it). It is a hotly contested issue that I feel very strongly about. The truth is that a lot of public services depend on the revenue generated by the estate tax and the number of people affected by it is less than 1.4% of the population. I should be so lucky to be wealthy enough for my children to have to pay the Estate Tax.

I recently wrote to my Senators and Representative to let them know how I feel and I thought I’d share it with you. Maybe you’d like to write to yours.

> Dear Senators Dodd and Lieberman and Congresswoman DeLauro,
>
> I am a small business owner and I support preserving the Estate Tax. I owe my life and business to the America the Estate Tax has helped build.
>
> The Estate Tax provides the needed revenue to create wonderful services and opportunities for many companies. Without the internet (which the Estate Tax helped fund), I would not be able to be the successful Web Designer I am. In fact, my career path would never have been an option. Likewise, I might not have had the education to do my job (nor my employees, theirs) had it not been for the public school system, also funded in part by the Estate Tax. Without a stable mail service, I would not be able to send the invoices and receive the payments my business depends on. Without the infrastructure our public highways and roadways provide, I would not be able to travel to meet with clients and my business would suffer. The same goes for air travel: it would not be as safe or reliable if the Federal Government had not used tax revenues (including the Estate Tax) to make it so.
>
> If I should become so wealthy that my children would even have to pay the Estate Tax, I do not feel it would be unfair for the U.S. Government to ask for a little back to repay the society that has made my business, job and lifestyle a reality. In order to ensure future generations can achieve the success that I have, we need to keep the Estate Tax.
>
> Sincerely,
>
> Aaron Gustafson


---
title: "My opinion on ALA’s redesign"
date: 2005-08-28 13:14:29
comments: false
tags:
  - "business"
  - "design"
description: "Yeah, I’m weighing in on the debate over the ALA redesign. I have to say I agree with Jon and Jeremy regarding the fixed 1024px width. My little 2¢ to add to this discussion is that I think more designers should consider the wondrous..."
permalink: /archives/my-opinion-on-the-alas-redesign/
---

Yeah, I’m weighing in on the debate over the ALA redesign. I have to say I agree with Jon and Jeremy regarding the fixed 1024px width. My little 2¢ to add to this discussion is that I think more designers should consider the wondrous world of CSS switching based on browser width (see Rammstein or the slightly better implementation on Drink-drive-lose.com’s Ad Challenge). Using this technique, users can view your site at their most comfortable screen resolution and you can still have a nicely designed page for them (fixed or liquid… or both). Can you say zoom layouts? I knew you could.
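Here is a minimal sketch of width-based switching, assuming the page links alternate stylesheets titled “narrow”, “medium” and “wide”. Those titles and the pixel breakpoints are hypothetical, not what Rammstein or the Ad Challenge actually use. It enables the stylesheet whose title matches the current width and disables the other titled ones, the same disabled-toggle idea classic style switchers rely on.

```javascript
// Hypothetical breakpoints (in CSS pixels) and stylesheet titles.
var LAYOUTS = [
  { maxWidth: 799, title: 'narrow' },
  { maxWidth: 1023, title: 'medium' },
  { maxWidth: Infinity, title: 'wide' }
];

// Return the title of the stylesheet that should apply at this width.
function pickLayout(width) {
  for (var i = 0; i < LAYOUTS.length; i++) {
    if (width <= LAYOUTS[i].maxWidth) {
      return LAYOUTS[i].title;
    }
  }
  return LAYOUTS[LAYOUTS.length - 1].title;
}

// Enable the matching titled stylesheet and disable the other titled ones.
// Untitled (persistent) stylesheets are left alone so base styles always apply.
function applyLayout(links, width) {
  var chosen = pickLayout(width);
  for (var i = 0; i < links.length; i++) {
    var link = links[i];
    if (/stylesheet/i.test(link.rel) && link.title) {
      link.disabled = (link.title !== chosen);
    }
  }
  return chosen;
}

// Browser wiring (hypothetical; run on load and whenever the window resizes):
// window.onload = window.onresize = function () {
//   applyLayout(document.getElementsByTagName('link'),
//               document.documentElement.clientWidth);
// };
```

Keeping the width-to-layout decision in a plain function makes the breakpoints easy to adjust without touching the DOM code.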

---
title: "Death to bad DOM Implementations"
date: 2005-09-02 19:10:52
comments: true
tags:
  - "(x)HTML"
  - "coding"
  - "JavaScript"
  - "web standards"
description: "I just encountered a DOM implementation issue in IE which took about three hours to solve (and like a year off my life). The story goes like this:"
permalink: /archives/death-to-bad-dom-implementations/
---

I just encountered a DOM implementation issue in IE which took about three hours to solve (and like a year off my life). The story goes like this:

I could not, for the life of me, figure out why a form submitted in Firefox was coming through perfectly while the same form submitted in IE was missing fields. The form in question has some normal fields and some dynamically generated ones (if JavaScript is enabled). The normal stuff was coming through fine, but I was getting no values for the dynamically generated fields when the form was submitted in IE. I checked the $_REQUEST variable (I am using PHP) to see what was coming through, just to be sure.

I immediately figured it was missing name attributes, but I was using the proper syntax to create the input elements via the DOM (note: the actual JS is more generic than this):

```javascript
var inpt = document.createElement('input');
inpt.setAttribute('name', 'company');
```

Sure enough, when I looked at the page through the Web Accessibility Toolbar’s View Generated Source, the element was missing the name attribute:

```html
<INPUT id=company maxLength=255>
```

After about another hour or two of fruitless Google-ing, I finally typed in the magic phrase (setting the name attribute in Internet Explorer) and ended up on Bennett McElwee’s blog post of the same name. Suddenly it was all clear and (as I expected) IE’s botched implementation of the DOM’s createElement function was to blame.

According to the MSDN page on the name attribute (linked and quoted in the blog entry):

> The NAME attribute cannot be set at run time on elements dynamically created with the createElement method. To create an element with a name attribute, include the attribute and value when using the createElement method.

It continued with the following example:


```javascript
var oAnchor = document.createElement("<A NAME='AnchorName'></A>");
```

The script “solution” Bennett posted was somewhat of a red herring, however, as Firefox would actually execute the createElement call intended for IE and end up with an element whose tag name was literally `<input name="company" />`, which would be rendered on the page as

```html
<<input name="company" /> id="company" maxlength="255" />
```

Perhaps you can see why this would be problematic.

I augmented Bennett’s script slightly and renamed the function createElementWithName so I wouldn’t have to use it on every element I created in the script:

```javascript
function createElementWithName(type, name) {
  var element;
  // document.all is used here as a rough test for IE, which cannot set
  // "name" after creation, so the name goes into the tag string instead.
  if (document.all) {
    element = document.createElement('<' + type + ' name="' + name + '" />');
  } else {
    // Standards-compliant browsers: create the element, then set the name.
    element = document.createElement(type);
    element.setAttribute('name', name);
  }
  return element;
}
```

I am not a super fan of the reference to document.all as it feels so much like browser sniffing. I am up for suggestions to improve the function if you have any ideas.
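One commonly suggested way to drop the document.all check is to feature-test instead: attempt the IE-style call, then fall back to the standard method if it throws (as current standards browsers do) or if it yields an element with the wrong nodeName (as Firefox did in the story above). This is a sketch, not a tested drop-in; it assumes name is a plain identifier with no quotes, since it is spliced into a markup string.

```javascript
// Sketch: feature-test IE's createElement('<tag name="...">') instead of
// checking document.all. Assumes `name` contains no quote characters.
function createElementWithName(type, name) {
  var element = null;
  try {
    // Old IE accepts a markup string here and applies the name correctly.
    // Current standards browsers throw (InvalidCharacterError) on the '<'.
    element = document.createElement('<' + type + ' name="' + name + '">');
  } catch (e) {
    element = null;
  }
  // Some older browsers don't throw and instead create an element literally
  // named '<input name=...>', so also verify the nodeName before trusting it.
  if (!element || element.nodeName.toLowerCase() !== type.toLowerCase()) {
    element = document.createElement(type);
    element.setAttribute('name', name);
  }
  return element;
}
```

The nodeName check is what guards against the lenient behavior that bit Firefox; the try/catch alone would not.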

Anyway, I am posting this to hopefully save someone else from the major headache I had today.

---
title: "Adding more to my plate"
date: 2005-09-07 11:48:14
comments: false
tags:
  - "business"
  - "presentations"
  - "projects & products"
description: "It’s funny, but the more I take on, the more zen I get about work. Perhaps it’s the recent addition of a daily trek to the gym in the wee hours of the morning which is getting my day off to a better start. Or maybe it’s the Pragmatic..."
permalink: /archives/adding-more-to-my-plate/
---

It’s funny, but the more I take on, the more zen I get about work. Perhaps it’s the recent addition of a daily trek to the gym in the wee hours of the morning which is getting my day off to a better start. Or maybe it’s the Pragmatic philosophy which is beginning to take hold since finishing The Pragmatic Programmer and starting Agile Web Development with Rails. Who knows, but I am thankful for the calm.

So what else have I added to my already overfull plate? Well, I recently joined the staff of A List Apart as a copy editor. In fact, Ross Howard’s High-Resolution Image Printing (in Issue 202) marks my editorial debut at the famed publication. I am very excited about getting to work with Erin, Jeffrey, Eric, Jason and the rest of the ALA all-stars, as I have been an avid reader since I discovered it back in 2000. If you are reading an article and notice an overabundance of <abbr> and <dfn>, there’s a good chance I am to blame.

I am also pleased to confirm that I will be speaking at SXSW Interactive in March of 2006. At present, I am working on one session with Jeremy Keith and two other panels which are still in the formative stages. I will have more details to provide you all in the coming weeks.

Also to come are some great award announcements, a few more articles, and another potentially big announcement in the web standards arena. In the meantime, I am preparing for a private web standards training session down in North Carolina and next week’s trip to Silicon Valley, where Molly, Andy and I will be putting on a great 3-day training session as part of the Web Design and Project Management Tour from WOW.

---
title: "Those left behind"
date: 2005-09-08 00:11:30
comments: false
tags:
  - "culture & society"
description: "In the wake of the tragedy that befell the citizens of and visitors to New Orleans recently, I’ve been amazed at the amount of support and kindness being shown to the survivors (Barbara Bush’s comments notwithstanding). Help has come..."
permalink: /archives/those-left-behind/
---

In the wake of the tragedy that befell the citizens of and visitors to New Orleans recently, I’ve been amazed at the amount of support and kindness being shown to the survivors (Barbara Bush’s comments notwithstanding). Help has come from the likely places as well as some unlikely ones and I am sure most of you have already donated money, blood and possibly even a room or two in your house/apartment. While a good deal of assistance is still needed for our fellow humans, there are others in need too: their displaced pets.

I received an email from a friend in Florida who has agreed to take in two dogs that made it through OK, but there are thousands more animals that need temporary homes, be they in kennels, animal boarding houses, veterinarians’ offices, animal shelters, foster homes or rescue programs.

From what I understand, there are all breeds of dogs and cats in need of our help. Some are in family groups of 2, 3 and 4 while others are solo. And there are volunteers willing to drive them to you, no matter where you live. The current safe houses for these animals are being inundated and some of these pets will have to be euthanized if they are not moved to make room for the incoming animals.

If you are interested in taking in a dog or cat (or know someone who is), contact Lynda V. on her cell: 203-515-3024 or at home: 203-227-5308 at any time (day or night).

---
title: "Playing catch-up"
date: 2005-09-20 13:07:03
comments: true
tags:
  - "books & articles"
  - "business"
  - "web standards"
description: "I’ve been insanely busy building a new Rails app for a client and travelling a lot for speaking engagements. I just got back from an incredible trip to San Jose (well, Cupertino actually) where Molly, Andy and I were doing some..."
permalink: /archives/playing-catch-up/
---

I’ve been insanely busy building a new Rails app for a client and travelling a lot for speaking engagements. I just got back from an incredible trip to San Jose (well, Cupertino actually) where Molly, Andy and I were doing some training. I had an amazing time with both of them and it was really fun to see Andy in action (I, unfortunately, did not have the pleasure of seeing him rock the audience at @media). We had a really great group of conference attendees too. I am a little saddened that this was my last stop on the WOW tour (I am missing Hawaii as it takes place on election day, but more on that later), but I have heard some rumblings that the show may go back on the road for a European leg. Fingers crossed.

Anyway, we’ve pushed a new issue of ALA out the door which includes a fantastic piece by Eric on the new ALA print stylesheet, and I have a new article in there as well: Improving Link Display for Print. It’s print mania at ALA, apparently.

Anyway, I am apparently going to New Jersey today for work, so I need to get ready. Ta for now.

---
title: "A little random stuff"
date: 2005-09-23 20:47:13
comments: false
tags:
  - "culture & society"
description: "I’ve had a few interesting things come through my inbox of late and I thought I would share them with you:"
permalink: /archives/a-little-random-stuff/
---

I’ve had a few interesting things come through my inbox of late and I thought I would share them with you:

With all of the travelling I was doing last week, I forgot to mention that some of the sites Dave & I worked on at Cronin and Company took home WebAwards from the Web Marketing Association. We took home two “Outstanding Website” awards (think silver) for our sites for Middlesex Hospital and the Drink-Drive-Lose Ad Challenge. I was particularly stoked as I was the designer of both sites and single-handedly built the Ad Challenge site, which had a lot of complex application-level code behind it. We also took home a “Standard of Excellence” award (think bronze) for the site we built for Garelick Farms’ Over the Moon Milk product launch. I’m not a big fan of that site’s design (not because it wasn’t mine), but I love the game Dave built.

I was also a judge of the WebAwards (as I have been for the last few years and, no, I did not judge any sites I was involved with) and I have to say I was very pleased to see more sites moving to web standards. Out of the 15 or so I judged in the initial round, there were at least two or three that were well on their way to being exemplary in the standards world (compared to 0 only two years ago). As with most marketing-related awards, Flash always seems to trump standards, but it seems like developers are starting to catch on to the standards trend more and more every year.

This year I also had the privilege of judging the Best of Show. I was pulling for Project Rebirth but National Geographic ended up taking home the top prize for Inside the Mafia, which was also in my top 3 picks.

All in all, this year’s WebAwards were pretty good to us (and a step up from the two “Standard of Excellence” awards we pulled last year for Ride4Ever and Bertucci’s Restaurants). I look forward to next year’s competition as well as the one at SXSW, where we hope to go from finalist to winner this year.

---
title: "New tutorial: Westhost on Rails"
date: 2005-10-02 15:40:20
comments: true
tags:
  - "books & articles"
  - "Ruby & Rails"
  - "servers"
description: "I have been hosting on Westhost for a little over four years now with no major complaints and I also host the majority of my clients there. They offer a lot of options for very little money and are always adding new features to the..."
permalink: /archives/new-tutorial-westhost-on-rails/
---

I have been hosting on Westhost for a little over four years now with no major complaints and I also host the majority of my clients there. They offer a lot of options for very little money and are always adding new features to the accounts. Unfortunately, Ruby on Rails is not one of them… yet.

As I have begun working a bit more with Rails, I have been looking to get it installed on my server (as well as some of my clients’). One of the major half-truths of Rails evangelism is the ease of install, especially with a host running Apache 1.3. After doing a few rather painful installs myself for some new projects, I finally decided to document the process of installing Ruby on Rails at Westhost for my own knowledge and to help any others who may be trying to do the same. Hopefully, Westhost will soon start to offer Rails installs as part of their hosting packages, but, until then, I offer up this humble tutorial.

---
title: "Jeremy Keith and Me"
date: 2005-10-11 16:04:11
comments: false
tags:
  - "books & articles"
  - "business"
  - "JavaScript"
  - "presentations"
  - "web standards"
description: "It managed to sneak past me for a few days, but my recent interview with Jeremy Keith has made its way into the latest issue of Digital Web Magazine. In the interview we cover the impetus behind his new book, WaSP’s DOM Scripting..."
permalink: /archives/jeremy-keith-and-me/
---

It managed to sneak past me for a few days, but my recent interview with Jeremy Keith has made its way into the latest issue of Digital Web Magazine. In the interview we cover the impetus behind his new book, WaSP’s DOM Scripting Task Force, and Jeremy’s future as a rockstar.

On a somewhat tangential note, if you’re interested in competing at the SXSW Web Awards in March, I recommend getting your entries in soon. If you enter by Friday (October 14th), you only have to pay $10 per category, which is too cheap to miss out on. If you attend, you’ll get more of Jeremy & me on “How to Bluff Your Way in DOM Scripting.”

---
title: "Proof Positive that Editing is an Art"
date: 2005-10-12 16:24:37
comments: true
tags:
  - "culture & society"
  - "humor"
description: "Have you ever gone to see a movie that is nothing like the trailer?"
permalink: /archives/proof-positive-that-editing-is-an-art/
---

Have you ever gone to see a movie that is nothing like the trailer?

diff --git a/export/2005-10-19-need-a-job.md b/export/2005-10-19-need-a-job.md new file mode 100644 index 0000000..4affbc4 --- /dev/null +++ b/export/2005-10-19-need-a-job.md @@ -0,0 +1,13 @@ +--- +title: "Need a job?" +date: 2005-10-19 17:07:27 +comments: false +tags: + - "accessibility" + - "business" + - "web standards" +description: "I’ve been informed of a great position in southern Connecticut for a strong standards designer/developer. The full-time position is at dLife.com , a site whose primary focus is providing information and support to individuals and..." +permalink: /archives/need-a-job/ +--- + +I’ve been informed of a great position in southern Connecticut for a strong standards designer/developer. The full-time position is at dLife.com, a site whose primary focus is providing information and support to individuals and families touched by diabetes. The parent company, LifeMed Media, is forming a formidable web team and is planning some great new material for this 11,000+ page site. There’s lots of content to play with and a heavy focus on standards. If you’ve got mad CSS skills and a penchant for semantic markup, this may be up your alley. As the site’s focus is the diabetic community (and diabetes can affect your vision), accessibility knowledge is also a good skill to bring to the table.

diff --git a/export/2005-10-24-proof-you-can-find-anything-on-ebay.md b/export/2005-10-24-proof-you-can-find-anything-on-ebay.md new file mode 100644 index 0000000..457981b --- /dev/null +++ b/export/2005-10-24-proof-you-can-find-anything-on-ebay.md @@ -0,0 +1,11 @@ +--- +title: "Proof you can find anything on eBay" +date: 2005-10-24 01:37:40 +comments: false +tags: + - "culture & society" + - "humor" +permalink: /archives/proof-you-can-find-anything-on-ebay/ +--- + + diff --git a/export/2005-10-24-san-francisco-never-looked-so-tasty.md b/export/2005-10-24-san-francisco-never-looked-so-tasty.md new file mode 100644 index 0000000..8ae4c8f --- /dev/null +++ b/export/2005-10-24-san-francisco-never-looked-so-tasty.md @@ -0,0 +1,12 @@ +--- +title: "San Francisco never looked so… tasty?" +date: 2005-10-24 12:19:55 +comments: false +tags: + - "culture & society" + - "humor" +description: "We always knew San Francisco was filled with rainbow pride, but it now seems the source was not what I expected . It reminds me of the mashed potato CN Tower scene in Canadian Bacon ." +permalink: /archives/san-francisco-never-looked-so-tasty/ +--- + +We always knew San Francisco was filled with rainbow pride, but it now seems the source was not what I expected. It reminds me of the mashed potato CN Tower scene in Canadian Bacon.

diff --git a/export/2005-10-26-in-2030-google-became-self-aware.md b/export/2005-10-26-in-2030-google-became-self-aware.md new file mode 100644 index 0000000..590e351 --- /dev/null +++ b/export/2005-10-26-in-2030-google-became-self-aware.md @@ -0,0 +1,15 @@ +--- +title: "In 2030 Google became self-aware…" +date: 2005-10-26 20:02:27 +comments: false +tags: + - "business" + - "culture & society" + - "humor" +description: "Some interesting news on the Google front: there have been sightings of a new Google universe which looks more than a little scary, especially in light of the mockumentary-with-a-straight-face known as EPIC 2014 ." +permalink: /archives/in-2030-google-became-self-aware/ +--- + +Some interesting news on the Google front: there have been sightings of a new Google universe which looks more than a little scary, especially in light of the mockumentary-with-a-straight-face known as EPIC 2014.

+What are they planning? If its present growth and expansion continue, will Google become subject to antitrust law, or will it argue that it isn’t because it is simply aggregating content?

+The only thing I know for sure is that Google-rage is going to grow.

diff --git a/export/2005-10-27-debugging-javascript-just-got-a-little-bit-easier.md b/export/2005-10-27-debugging-javascript-just-got-a-little-bit-easier.md new file mode 100644 index 0000000..e1d9d09 --- /dev/null +++ b/export/2005-10-27-debugging-javascript-just-got-a-little-bit-easier.md @@ -0,0 +1,26 @@ +--- +title: "Debugging JavaScript just got a little bit easier" +date: 2005-10-27 01:59:39 +comments: true +tags: + - "business" + - "coding" + - "JavaScript" + - "projects & products" +description: "Like many of you, I’m sure, I hate debugging JavaScript. Really, it’s not the debugging, per se , as much as it’s using alert() to echo stuff out to the screen. It’s stupid and distracting and takes forever if you’re debugging a lot of..." +permalink: /archives/debugging-javascript-just-got-a-little-bit-easier/ +--- + +Like many of you, I’m sure, I hate debugging JavaScript. Really, it’s not the debugging, per se, as much as it’s using alert() to echo stuff out to the screen. It’s stupid and distracting and takes forever if you’re debugging a lot of stuff.

For the last few months, I’ve been toying with a few different means of error reporting and echoing out debugging information, but hadn’t really been satisfied with anything I’d come up with. I used to do quite a bit of Flash work back in the day (before Dave came along and put my best efforts to shame) and always loved the Trace window. I liked that you could just echo stuff out to it and it acted as a running tally of pretty much anything you wanted to track: variable values, messages, etc. Two days ago I decided that was what I wanted for JavaScript.

+I toyed with the idea of spawning a popup and tracing the info to that, but I don’t like popups. They are possibly more annoying than alert messages (well… maybe not). I decided to echo the messages out to a div on the page instead. Then feature creep set in. Before I knew it, it was a draggable, scalable window with some nifty features. Never one to be selfish, I thought other people could find a use for it too, so I’ve released it for anyone who wants it: here it is. Use it, play with it and improve on it as you see fit.

The script currently has the following features:

+Special thanks go out to Aaron Boodman, whose DOM Drag was perfect for the dragging and enabled me to hook up a window stretcher pretty easily; Richard Rutter, whose Browser Stickies were also somewhat of an inspiration; and Dave, for helping me debug the scaling code.

+Aside: one nice feature of the script is that, once it was operational, I was able to use it to debug itself… how cool is that?

diff --git a/export/2005-10-30-jstrace-two-days-on.md b/export/2005-10-30-jstrace-two-days-on.md new file mode 100644 index 0000000..8cc27e0 --- /dev/null +++ b/export/2005-10-30-jstrace-two-days-on.md @@ -0,0 +1,18 @@ +--- +title: "jsTrace two days on" +date: 2005-10-30 01:04:04 +comments: true +tags: + - "business" + - "coding" + - "JavaScript" + - "projects & products" +description: "The reception for our latest script release , jsTrace , has been fantastic. From the write-up on the DOM Scripting Task Force blog to all of the emails and comments, it’s been great." +permalink: /archives/jstrace-two-days-on/ +--- + +The reception for our latest script release, jsTrace, has been fantastic. From the write-up on the DOM Scripting Task Force blog to all of the emails and comments, it’s been great.

The past few days have seen many ideas, requests and enhancements sent my way. Some have been rolled into the jsTrace 1.1 release which I made public today. One such enhancement (brought to us by Joe Shelby) I have dubbed “memory,” as it allows the debugging window to remember both its position and size the next time it is opened (via cookies). Further enhancements have been made to the underlying code to streamline development of additional tools for the bottom toolbar and the font size of the bottom toolbar has also been increased (per several requests).
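Remembering the window’s position and size between page loads essentially means writing four numbers into a cookie and reading them back. Here is a minimal sketch of that idea, assuming a hypothetical cookie name `jsTraceWindow` and a simple `left,top,width,height` value format; it is an illustration, not jsTrace’s actual code.

```javascript
// Hypothetical cookie name; jsTrace's real cookie format may differ.
const COOKIE_NAME = "jsTraceWindow";

// Build a cookie assignment string that stores the window's position and
// size (in pixels) as "left,top,width,height" for the given number of days.
function serializeGeometry(geom, days) {
  const expires = new Date(Date.now() + days * 24 * 60 * 60 * 1000);
  const value = [geom.left, geom.top, geom.width, geom.height].join(",");
  return COOKIE_NAME + "=" + encodeURIComponent(value) +
    "; expires=" + expires.toUTCString() + "; path=/";
}

// Read the geometry back out of a document.cookie-style string
// ("name=value; name2=value2"). Returns null if nothing valid is stored.
function parseGeometry(cookieString) {
  const pairs = cookieString.split(";");
  for (const rawPair of pairs) {
    const pair = rawPair.trim();
    if (pair.indexOf(COOKIE_NAME + "=") !== 0) continue;
    const parts = decodeURIComponent(pair.slice(COOKIE_NAME.length + 1))
      .split(",")
      .map(Number);
    if (parts.length !== 4 || parts.some(Number.isNaN)) return null;
    const [left, top, width, height] = parts;
    return { left, top, width, height };
  }
  return null;
}

// In a browser: document.cookie = serializeGeometry({ left: 40, top: 60, width: 320, height: 240 }, 30);
// and later:    const restored = parseGeometry(document.cookie);
```

Keeping the string handling separate from `document.cookie` means you can test the format on its own. The browser only needs the single assignment after a move or resize and the single read before the window is drawn.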

+I hope you all enjoy the improvements. Keep ’em coming.

+Update: We’ve also been mentioned on DOMScripting.com.

+Another update (to 1.2): I added a buffer to handle traces executed prior to the jsTrace window being generated. The buffer is written to the viewport once it is generated.
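The buffering idea works like this. Until the trace window exists, messages are queued in an array. Once the window is generated, the queue is flushed into it in order, and later messages go straight to the window. Here is a minimal sketch of that pattern, assuming a hypothetical `createTracer` factory and a viewport object that only needs an `appendLine` method; jsTrace’s actual API may differ.

```javascript
// Hypothetical factory illustrating the buffer-then-flush approach.
// `viewport` is any object with an appendLine(text) method; in a browser it
// would wrap the trace window's scrolling div.
function createTracer() {
  let viewport = null; // set once the trace window has been generated
  const buffer = [];   // messages traced before the window existed

  return {
    // Write a message immediately if the window exists; otherwise queue it.
    trace(message) {
      const text = String(message);
      if (viewport) {
        viewport.appendLine(text);
      } else {
        buffer.push(text);
      }
    },
    // Called once the window has been generated: flush the queued messages
    // in their original order, then route future traces straight through.
    attach(newViewport) {
      viewport = newViewport;
      while (buffer.length > 0) {
        viewport.appendLine(buffer.shift());
      }
    },
    // Number of messages still waiting for a window.
    pending() {
      return buffer.length;
    }
  };
}
```

In a browser, `appendLine` might create a `div`, set its text to the message, and append it to the viewport element. The buffering logic stays the same either way.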

In his most recent essay, Gerry McGovern was discussing expert opinions and voices. One particular comment he made struck a chord:

+++The Web is maturing. It needs more people like Jakob Nielsen who propose, explain and defend rules.

+

Now you can say what you will about Jakob, but I think the sentiment is right. I also think that praise needs to be spread a little farther to include Molly, Eric, Jeffrey and the countless other standards evangelists (both internationally renowned and sitting in the cube next to you) who feel it is their calling to enforce the “rules” of the web. These are people who truly believe, as I do, that constraints are necessary for creativity. Jason Fried mentioned something similar in his discussion of Basecamp (and I am probably paraphrasing):

+++Limited time, limited people, limited funding… they make you creative

+

I think the same could be said for embracing web standards. I mean look at the Zen Garden, the Web Standards Awards, etc. There is some amazingly creative work out there that embraces the “restrictions” of web standards. Frankly, I think that web standards are the main reason DOM scripting (and all that comes with it) has been able to flourish: standards ensure a solid platform upon which to build anything. Their constraints free you to get creative and really make something new.

+So let’s hear it for them: a round of applause for all of the standards evangelists out there. Keep up the great work, we appreciate all that you do.

diff --git a/export/2005-11-02-more-developments-in-jstrace.md b/export/2005-11-02-more-developments-in-jstrace.md new file mode 100644 index 0000000..7a75967 --- /dev/null +++ b/export/2005-11-02-more-developments-in-jstrace.md @@ -0,0 +1,15 @@ +--- +title: "More developments in jsTrace" +date: 2005-11-02 04:31:24 +comments: true +tags: + - "business" + - "coding" + - "JavaScript" + - "projects & products" +description: "As I mentioned to Ian earlier today, Dave and I were discussing having the jsTrace window keep pace with whatever the most current line is spit out to it. A few hours later, here it is: jsTrace 1.3 . I have some other stuff (read..." +permalink: /archives/more-developments-in-jstrace/ +--- + +As I mentioned to Ian earlier today, Dave and I were discussing having the jsTrace window keep pace with whatever the most current line is spit out to it. A few hours later, here it is: jsTrace 1.3. I have some other stuff (read: paying projects) that need my attention, so I am putting jsTrace down for a bit. Dave & I will be posting a few more demos of its use in different situations, but as far as further development goes, I’m gonna be hands-off for a bit to let you all get a chance to participate.

+And if you’re in the participatory mood, check out this site I built with Adaptive Path. I will be posting some details about the project and how I accomplished certain design features once Kel’s campaign’s over and life gets a little less hectic.

diff --git a/export/2005-11-03-disneystorecouk-in-retrograde.md b/export/2005-11-03-disneystorecouk-in-retrograde.md new file mode 100644 index 0000000..3b72823 --- /dev/null +++ b/export/2005-11-03-disneystorecouk-in-retrograde.md @@ -0,0 +1,15 @@ +--- +title: "DisneyStore.co.uk in retrograde" +date: 2005-11-03 11:08:29 +comments: false +tags: + - "business" + - "web standards" +description: "Andy’s beautiful standards-based design is gone, replaced by a table-based pile of (ahem) tag soup. Being the gentleman that he is, Andy has chosen to remain silent on the issue , but he has provided a forum for anyone else who wants to..." +permalink: /archives/disneystorecouk-in-retrograde/ +--- + +Andy’s beautiful standards-based design is gone, replaced by a table-based pile of (ahem) tag soup. Being the gentleman that he is, Andy has chosen to remain silent on the issue, but he has provided a forum for anyone else who wants to chime in. Molly posted an open letter to Disney which I think sums the whole thing up quite well:

++diff --git a/export/2005-11-03-oracle-opens-up.md b/export/2005-11-03-oracle-opens-up.md new file mode 100644 index 0000000..a76fdf6 --- /dev/null +++ b/export/2005-11-03-oracle-opens-up.md @@ -0,0 +1,22 @@ +--- +title: "Oracle opens up" +date: 2005-11-03 15:25:17 +comments: false +tags: + - "business" + - "databases" +description: "In hopes of stemming the massive explosion of open source database use, Oracle is preparing an “express” version of it’s Oracle Database 10g line: Oracle Database XE . Like many things on the web right now, it’s currently in beta, with..." +permalink: /archives/oracle-opens-up/ +--- + +Shame on you Disney.

+

In hopes of stemming the massive explosion of open source database use, Oracle is preparing an “express” version of its Oracle Database 10g line: Oracle Database XE. Like many things on the web right now, it’s currently in beta, with a full release planned for late this year.

+Courting the open source set is an interesting move for Oracle. PHP developers are the obvious target right now, but I wouldn’t be surprised to see some of the focus shifted to Rails developers in the near future.

+Here’s a breakdown of some of the features/limitations:

+I haven’t downloaded it to play yet, but there seem to be some fairly detailed instructions on both installation and integration on the PHP end.

diff --git a/export/2005-11-05-what-are-they-thinking.md b/export/2005-11-05-what-are-they-thinking.md new file mode 100644 index 0000000..7118a1c --- /dev/null +++ b/export/2005-11-05-what-are-they-thinking.md @@ -0,0 +1,12 @@ +--- +title: "What are they thinking?" +date: 2005-11-05 21:12:02 +comments: false +tags: + - "business" + - "culture & society" +description: "I knew things had taken a turn for the worse when DMCA passed and media owners were discussing their desire to spy on consumers’ computers in search of illicit media, but who knew it had gotten this bad ?" +permalink: /archives/what-are-they-thinking/ +--- + +I knew things had taken a turn for the worse when DMCA passed and media owners were discussing their desire to spy on consumers’ computers in search of illicit media, but who knew it had gotten this bad?

diff --git a/export/2005-11-07-wait-for-it.md b/export/2005-11-07-wait-for-it.md new file mode 100644 index 0000000..e670e95 --- /dev/null +++ b/export/2005-11-07-wait-for-it.md @@ -0,0 +1,12 @@ +--- +title: "Wait for it…" +date: 2005-11-07 10:58:21 +comments: false +tags: + - "humor" +description: "A fantastic new t-shirt from J! NX ." +permalink: /archives/wait-for-it/ +--- + + +A fantastic new t-shirt from J!NX.

diff --git a/export/2005-11-11-e-voting-comes-to-ct.md b/export/2005-11-11-e-voting-comes-to-ct.md new file mode 100644 index 0000000..992b353 --- /dev/null +++ b/export/2005-11-11-e-voting-comes-to-ct.md @@ -0,0 +1,21 @@ +--- +title: "e-Voting comes to CT" +date: 2005-11-11 15:22:26 +comments: false +tags: + - "accessibility" + - "culture & society" + - "usability" +description: "Here in Connecticut, the Secretary of State is getting ready to purchase new computerized voting machines and is doing an exhibition of the different options throughout the state’s five Congressional Districts. As computerized voting is..." +permalink: /archives/e-voting-comes-to-ct/ +--- + +Here in Connecticut, the Secretary of State is getting ready to purchase new computerized voting machines and is doing an exhibition of the different options throughout the state’s five Congressional Districts. As computerized voting is such a hot topic right now, I highly recommend anyone and everyone who lives in my state go to one of the exhibitions and offer some sort of public comment. We need to ensure we get safe voting machines that actually record what we intend them to.

+Here are the dates:

+All this week, the Secretary of State’s office is offering demonstrations of and soliciting public comment on the three finalists for Connecticut’s electronic voting machines. The line was long at Monday’s demonstration at Buckland Hills Mall in Manchester (easily an hour wait), but well worth it to see what we’re in for when we return to the polls next year.

+The following is a breakdown of the three machines being demonstrated and the pros and cons of each.

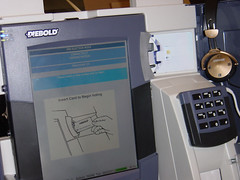

+ This voting machine looks like an ATM, which is not surprising given Diebold’s involvement in that market. According to the literature, to use the machine, you take the plastic card (which looks like a basic ATM or credit card) given to you by a poll worker and insert it into the machine. You then use the touch screen to make your choices for each race. When you are nearing completion, you are shown a summary screen, which you review and then choose to cast if it looks good. You can touch individual races or “Review Ballot” to make changes. I have no idea how legible the on-screen text is or how easy the machine is to operate, because when I was observing it, they were only showing the “Insert your card” screen.


I also did not get to see the voter-verified paper trail add-on in action, but it consisted of a little slot with a magnifying plastic door over it in the lower right-hand corner of the machine, where one would assume the paper “receipt” drops. The plastic flap was open when I was viewing the machine, so I am uncertain whether the slip is removed by a person or not.

+This machine does offer a set of headphones for someone with disabilities and a numeric keypad which I imagine could be used for making selections, but I was amazed to find that the keypad did not have any braille markings on it to indicate the number. I realize not all blind or visually-impaired people can read braille, but something as basic as the numbers zero through nine can be easily picked up and would greatly improve the usability of this device.

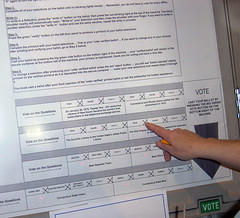

+ This electronic voting machine is not exactly what I imagine when I think of an electronic voting system. It is electronic, so I suppose it qualifies, but it feels more like playing a game of Battleship or Operation than casting a vote during an election.


The machine consists of a giant light-up board covered by a large sheet of paper with all of the offices and candidates on it. Each race has a blinking red light associated with it. The voter’s job is to extinguish each red light by pressing on the numbered box corresponding to the candidate they choose. A voter can change his or her mind by clicking the box corresponding to the previously chosen candidate to de-select him or her and then make a new choice. When voting is complete, the voter pushes the large, green “VOTE” button at the bottom, which casts the voter’s virtual ballot.

+Personally, I found the “interface” a little awkward to use, as seeing the red lights through the paper was not necessarily easy. I also wonder how easy it would be for someone with macular degeneration or another visual impairment short of blindness. As for the blind, it appears that they are out of luck with this machine. I couldn’t find a single accessibility feature apart from it being usable by someone in a wheelchair (and I question whether the text at the very top of the paper, giving instructions on the machine’s use, is actually readable from a height of 3-4 feet). I also failed to see a paper trail on this machine, but perhaps I just missed it.

+ This was the most promising of the three voting machines exhibited, but it too had its drawbacks. The interface was a touch screen, but the arrangement of the races and questions demonstrated was anything but intuitive (obviously a byproduct of developers “designing”). One of the better features of this machine was the ability to “zoom in” on a question or race so that it was essentially all you saw on the screen. The write-in process was a little clunky, requiring the use of a keyboard with no real place to store it for easy access. And who wants to be bothered with juggling a keyboard while trying to vote?


The most interesting feature of this machine was its paper trail. Each vote cast has a unique serial number (which is untraceable to an individual voter, of course), and when you are almost ready to cast your ballot, a paper tally of your votes is displayed in the box next to the machine for you to look at. If it is correct, you cast your votes via the interface. If it is incorrect, you can return to the voting system and change your choices (or alert a poll worker to a discrepancy if what you see on the screen is not what is on the paper) before viewing a new paper “receipt.” All of the paper receipts are kept in the locked box that displays them, so they are available in the event of a recount.


This machine is pretty accessible to mobility and visually impaired individuals although those with complete blindness would still require some assistance to cast their ballot.

I am a little disappointed in the range of devices being offered to Connecticut. Perhaps this is a reflection of the poor quality of voting systems available or the poor turnout in response to the state’s RFP.

+My main concern stems from the fact that our current voting machines in Connecticut were originally designed in the late 1800s (though they were, I imagine, built in the ’40s or ’50s) and we are still using them. Granted, they are mechanical, and changes to a mechanical device are a little more difficult than software upgrades. Still, it is likely that we will have these electronic machines for many decades to come, so we should have the best machines we can.

+And this doesn’t even begin to address the potential issues with the underlying software incorrectly recording (or failing to record) votes. I really wish that the companies making the software that powers these machines would have to make their source code available to the public so experts in the field could examine and suggest improvements to the algorithms that will (inevitably) power our democracy.

+If you are interested in seeing these machines first-hand and offering your comments to the Secretary of State’s office, please attend one of the remaining exhibitions.


diff --git a/export/2005-11-18-another-political-divide.md b/export/2005-11-18-another-political-divide.md new file mode 100644 index 0000000..a2ffab3 --- /dev/null +++ b/export/2005-11-18-another-political-divide.md @@ -0,0 +1,14 @@ +--- +title: "Another political divide" +date: 2005-11-18 10:54:04 +comments: false +tags: + - "culture & society" +description: "In the interest of observing politics and activism online, I have signed up to receive numerous newsletters and “action alerts” from groups ranging from MoveOn and the ACLU to the GOP . Politics aside, one thing I find very striking is..." +permalink: /archives/another-political-divide/ +--- + +In the interest of observing politics and activism online, I have signed up to receive numerous newsletters and “action alerts” from groups ranging from MoveOn and the ACLU to the GOP. Politics aside, one thing I find very striking is how much freedom is given to an individual to add a personal message or rewrite a letter in support of or against a particular issue by the “left.” The “right,” however, does not seem to have any interest in their activists’ opinions.

+When I received this recent solicitation from the GOP, for instance, I was not given any opportunity to rewrite the letter in my own words or even add a personal message to the missive. All the GOP seems to want is my signature and the email addresses of my friends. Even if I agreed with an issue the GOP was asking me to support, a practice like that would make me very disinclined to take action.

+On the other “side,” you have action requests such as this petition from MoveOn. Much more emphasis is placed on personalizing the message of the action. Even if I do not have the time to add a personal message, I appreciate the effort to include me in the process and am more likely to take action.

+Perhaps it is just my conspiracy-addled mind working overtime, but I find this dichotomy more than a little odd. What does the GOP have to fear from their own activists?

diff --git a/export/2005-11-29-daves-work-draws-a-crowd.md b/export/2005-11-29-daves-work-draws-a-crowd.md new file mode 100644 index 0000000..0e65779 --- /dev/null +++ b/export/2005-11-29-daves-work-draws-a-crowd.md @@ -0,0 +1,18 @@ +--- +title: "Dave’s Work Draws a Crowd" +date: 2005-11-29 20:59:42 +comments: false +tags: + - "business" + - "Flash & ActionScript" + - "projects & products" +description: "I just saw a copy of the latest issue of DMNews and Dave’s hard work garnered the Wadsworth Atheneum a feature story and Cronin and Company some major kudos. Here’s an excerpt from the article:" +permalink: /archives/daves-work-draws-a-crowd/ +--- + +I just saw a copy of the latest issue of DMNews and Dave’s hard work garnered the Wadsworth Atheneum a feature story and Cronin and Company some major kudos. Here’s an excerpt from the article:

+++An online campaign initiated by the 161-year-old museum and developed by Glastonbury, CT, ad agency Cronin and Company Inc. doubled visits to the site at www.wadsworthatheneum.org. Components included a SurrealPainter Web tool, banner ads and the seeding of blogs.

+Central to the campaign is the tool at www.wadsworthatheneum.org/painter. Visitors through Dec. 18 can choose from various colorful backgrounds and objects, then flip, copy, layer or scale them. Once completed, the online artwork can be titled, printed, published and e-mailed to family and friends.

+

Be sure to make your own surrealist painting, while you still can.

diff --git a/export/2005-11-29-savvy-marketers-take-note.md b/export/2005-11-29-savvy-marketers-take-note.md new file mode 100644 index 0000000..9f619ea --- /dev/null +++ b/export/2005-11-29-savvy-marketers-take-note.md @@ -0,0 +1,29 @@ +--- +title: "Savvy Marketers Take Note" +date: 2005-11-29 18:14:07 +comments: false +tags: + - "business" + - "coding" + - "search engine optimization" + - "web standards" +description: "One of the web’s preeminent marketing websites, MarketingSherpa , has just published an article which may start a web standards stampede . The focus is Firefox , but the underlying message is standards, standards and more standards:" +permalink: /archives/savvy-marketers-take-note/ +--- + +One of the web’s preeminent marketing websites, MarketingSherpa, has just published an article which may start a web standards stampede. The focus is Firefox, but the underlying message is standards, standards and more standards:

+++Your more savvy Web designers are likely all agog over Firefox, because its support of Web Standards makes it easier to design and maintain effective Web pages. …

+For marketers, these standards are so darn important because they affect the bottom line: your budget. In fact, The Web Standards Project … estimates that before today’s growing lack of support for standards, the “fractured browser market” was adding at least 25% to the cost of developing Web sites. And that’s just one tiny piece of the revenue picture.

+“Housing construction, electrical wiring, automobile design, all these benefit from design standards,” says Scott McDaniel, MarketingSherpa’s own Internet Director. “Web site construction is maturing in much the same way.”

+

The author, Heidi Anderson, even tallies her “6 Business Benefits of ‘Web Standards-based’ design”:

+It’s nice to see marketers taking note of all we can do for them. Now roll up your shirt sleeves and get to work.

diff --git a/export/2005-12-04-gartner-wants-you.md b/export/2005-12-04-gartner-wants-you.md new file mode 100644 index 0000000..a2d9134 --- /dev/null +++ b/export/2005-12-04-gartner-wants-you.md @@ -0,0 +1,22 @@ +--- +title: "Gartner wants you" +date: 2005-12-04 18:30:33 +comments: false +tags: + - "business" + - "web standards" +description: "My friends at Gartner are hiring a new member for their web team. If you are within commuting distance of Stamford, CT (about 45 minutes outside of NYC by train), I highly recommend considering this job as I know first-hand how awesome..." +permalink: /archives/gartner-wants-you/ +--- + +My friends at Gartner are hiring a new member for their web team. If you are within commuting distance of Stamford, CT (about 45 minutes outside of NYC by train), I highly recommend considering this job as I know first-hand how awesome this team is to work with.

+You’ll need the following areas of knowledge (in addition to a thirst for more):

+If you’re interested, email my friend Aidan (aidan [dot] brewer [at] gartner [dot] com) and let him know I sent you.

diff --git a/export/2005-12-06-karova-redesigns.md b/export/2005-12-06-karova-redesigns.md new file mode 100644 index 0000000..80128dd --- /dev/null +++ b/export/2005-12-06-karova-redesigns.md @@ -0,0 +1,17 @@ +--- +title: "Karova redesigns" +date: 2005-12-06 21:05:22 +comments: false +tags: + - "(x)HTML" + - "business" + - "coding" + - "CSS" + - "design" + - "web standards" +description: "That beautiful bastion of standards-based e-commerce, Karova , has gotten a face lift. Mr. Malarkey deserves many kudos for yet another rich, engaging and playful design. And if you think the sales materials look good , you should see..." +permalink: /archives/karova-redesigns/ +--- + +That beautiful bastion of standards-based e-commerce, Karova, has gotten a face lift. Mr. Malarkey deserves many kudos for yet another rich, engaging and playful design. And if you think the sales materials look good, you should see the store management dashboard. I was offered a sneak peek and couldn’t help but fawn over its sophisticated simplicity. It’s not only usable, but it makes managing a web shop (dare I say it) kinda fun. For a little background on the redesign, read Andy’s writeup.

+Now if only we could find a suitable partner to bring their product to the US (hint, hint).

diff --git a/export/2005-12-19-holiday-greetings-games.md b/export/2005-12-19-holiday-greetings-games.md new file mode 100644 index 0000000..b2fc718 --- /dev/null +++ b/export/2005-12-19-holiday-greetings-games.md @@ -0,0 +1,19 @@ +--- +title: "Holiday Greetings & Games" +date: 2005-12-19 11:20:14 +comments: true +tags: + - "business" + - "projects & products" +description: "This has been one crazy Fall work-wise, so I apologize for the scarcity of posts, but I do have a few holiday treats for you." +permalink: /archives/holiday-greetings-games/ +--- + +This has been one crazy Fall work-wise, so I apologize for the scarcity of posts, but I do have a few holiday treats for you.

+From my day job at Cronin and Company, we’ve got Cronin’s “Grab Bag of Goodness.” As with most internal projects, this was a major rush job. I take no credit for the design (which was handed to me with no wiggle room), but when it comes to the CSS and DOM Scripting, that I’ll proudly take credit for. Use the code “9301” to get in. Of particular note in this piece:

+- alt attribute and with images and CSS off, you’re still golden. As this was a one-off, sIFR seemed like overkill.
+- ul and each item is a li. CSS makes it all display: inline; and then JavaScript keeps reducing the margin-left of the first li by 2px until the absolute value of its margin-left is greater than the li’s width. That li is then plucked from the front of the list and appended to the end. Though I am not a big fan of scrolling marquees, this was a pretty fun experiment.
+- inputs, but Safari’s inability to customize certain form controls made me abandon that element in favor of button. It’s a great effect too (IMHO).

+Then there’s the Easy Designs holiday card. I will spare the commentary on this one with the exception of giving major props to Dave for building the game in a day. I’m pretty darn proud of it, especially since we pretty much went from concept to execution in a matter of days (yeah, procrastination’s a bitch). If you’re interested, you can see a rough approximation of the email that went out (our first Campaign Monitor mailing) or simply play the game.
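The marquee rule from the Grab Bag notes is this: shift the first li left by 2px per tick, and once the absolute value of its margin-left exceeds its width, move it to the end of the list. A minimal JavaScript sketch of that rule follows, built on a few assumptions. `tick` is a hypothetical DOM-free model that tracks item widths as plain numbers so the logic can be checked outside a browser. Resetting the offset to zero after each rotation is an assumption. `startMarquee` (also hypothetical) shows one way to apply the same rule to a real ul, and its 30 ms interval is a guess rather than anything from the original piece.

```javascript
// Minimal sketch of the scrolling-marquee rule described above.
// Assumptions (not from the original piece): the function names, the
// reset of the offset to 0 after each rotation, and the 30 ms interval.

// DOM-free model: `items` holds each li's width in px (display order),
// `offset` is the first li's current margin-left (0 or negative).
function tick(state, step = 2) {
  let items = state.items;
  let offset = state.offset - step; // slide the first item further left

  // Once the first item has scrolled entirely out of view, pluck it from
  // the front of the list, append it to the end, and reset the offset.
  if (Math.abs(offset) > items[0]) {
    items = items.slice(1).concat(items[0]);
    offset = 0;
  }
  return { items, offset };
}

// Hypothetical browser wiring of the same rule onto a real <ul>.
function startMarquee(ul, step = 2, intervalMs = 30) {
  return setInterval(function () {
    const first = ul.firstElementChild;
    if (!first) return;

    const offset = (parseInt(first.style.marginLeft, 10) || 0) - step;
    if (Math.abs(offset) > first.offsetWidth) {
      first.style.marginLeft = ""; // clear before moving to the end
      ul.appendChild(first);       // appendChild moves an existing node
    } else {
      first.style.marginLeft = offset + "px";
    }
  }, intervalMs);
}
```

For example, starting from `{ items: [10, 20, 30], offset: 0 }`, six calls to `tick()` move the 10px item to the end of the list. The DOM version relies on `appendChild` moving an existing node rather than copying it, which is what makes the “pluck and append” step a one-liner.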

diff --git a/export/2006-01-09-got-ajax-skills-odeo-beckons.md b/export/2006-01-09-got-ajax-skills-odeo-beckons.md new file mode 100644 index 0000000..0a50769 --- /dev/null +++ b/export/2006-01-09-got-ajax-skills-odeo-beckons.md @@ -0,0 +1,11 @@ +--- +title: "Got AJAX Skills? Odeo beckons" +date: 2006-01-09 15:21:02 +comments: false +tags: + - "business" +description: "The fine folks over at Odeo are looking for an “ AJAX Engineer ” to round out their web dev team. If you live & breathe JavaScript, CSS , XHTML and live in or around San Francisco, drop them a line (jobs [at] odeo [dot] com). Everyone I..." +permalink: /archives/got-ajax-skills-odeo-beckons/ +--- + +The fine folks over at Odeo are looking for an “AJAX Engineer” to round out their web dev team. If you live & breathe JavaScript, CSS, XHTML and live in or around San Francisco, drop them a line (jobs [at] odeo [dot] com). Everyone I know that works there seems to love it, making me wish I lived a little closer to SF.

diff --git a/export/2006-01-09-repetition-and-replacement.md b/export/2006-01-09-repetition-and-replacement.md new file mode 100644 index 0000000..1ace6e4 --- /dev/null +++ b/export/2006-01-09-repetition-and-replacement.md @@ -0,0 +1,17 @@ +--- +title: "Repetition and Replacement" +date: 2006-01-09 15:26:31 +comments: true +tags: + - "(x)HTML" + - "books & articles" + - "coding" + - "CSS" + - "design" + - "web standards" +description: "While working on a new site for a client, I stumbled upon an application of the Leahy / Langridge method of image replacement… to images. As far as I know, it had not been attempted before and, frankly, I was a little amazed it worked." +permalink: /archives/repetition-and-replacement/ +--- + +While working on a new site for a client, I stumbled upon an application of the Leahy/Langridge method of image replacement… to images. As far as I know, it had not been attempted before and, frankly, I was a little amazed it worked.

+The technique, which I am calling iIR for “img Image Replacement” (a bit of a mouthful, I know), helps you keep your code leaner and meaner without sacrificing stylability or accessibility. You can read the article on the Easy Designs site, and feel free to drop your comments below. Maybe you can think of a better name for it too.

This is perhaps the coolest (albeit experimental) way to browse Flickr: retrievr from System One Labs.

diff --git a/export/2006-01-16-consumer-choice-and-fair-use.md b/export/2006-01-16-consumer-choice-and-fair-use.md new file mode 100644 index 0000000..6c458b0 --- /dev/null +++ b/export/2006-01-16-consumer-choice-and-fair-use.md @@ -0,0 +1,18 @@ +--- +title: "Consumer Choice and Fair Use" +date: 2006-01-16 18:09:51 +comments: false +tags: + - "business" + - "internationalization & localization" +description: "In a recent issue of Game Informer , I read an interesting news piece on the upcoming PS 3 , but its significance goes far beyond that system and even the world of video games. In fact, it applies to all digital media." +permalink: /archives/consumer-choice-and-fair-use/ +--- + +In a recent issue of Game Informer, I read an interesting news piece on the upcoming PS3, but its significance goes far beyond that system and even the world of video games. In fact, it applies to all digital media.

+It seems SCE Australia recently lost a court case involving the use of mod chips to play foreign titles. Current video game systems (and indeed the DVD movie industry) use region encoding to keep certain movies and games out of certain areas, but the court ruled region encoding was “an artificial trade barrier that restricted consumers’ choice.” The ruling impacts only those Aussie gamers who wish to play foreign games, and the decision was obviously made in observance of Australia’s copyright laws, but why did it take a court ruling to make it so? It seems like a no-brainer to me.

+Of course, modding does still void your warranty, but once that’s up, what skin is it off the video game industry’s nose if you play a foreign game? They still get paid. It’s not like you’re stealing from anyone. The same should go for movies. Why should I not be able to buy a copy of Delicatessen on DVD simply because I live in the US? I bought a copy on LaserDisc back in the day and the DVD is available in Europe. Granted, I’ve got the damn PAL/NTSC thing to worry about, but really, why do we need region encoding? There’s no such thing for CDs and it works out great for everyone. We can listen to music from anywhere and there is very big money in it for record shops that stock import CDs (at least in the US, most imports run upwards of $30 for a full-length CD). Why shouldn’t the same go for movies and video games?

+Alright, so I’ve probably beaten that horse to death now. On to the second interesting little factoid in the article… the one that really scares me: Sony has apparently developed a technology which could be used to stop an individual from playing used games. The technology, developed by PlayStation creator Ken Kutaragi, would encrypt an authentication code on the disc, making it playable only on the first system it is played on. There’s no word on whether this technology will be employed in the PS3, but the sheer fact that something like this has been developed is absurd.

+An argument against this technology could probably be made on the grounds of Fair Use here in the US. After all, if you own two of the same video game systems (Perhaps your parents are divorced and you have an Xbox at each parent’s house or you’re really lazy and have one upstairs and one downstairs. I don’t know, work with me here…), why should you not be able to play the game you paid $50 for on both? Based on the Australian ruling and its focus on consumer choice, I imagine the Aussies would kill it as well, but did anyone stop to think of the repercussions such a technology would have?

+Such technology has the power to kill the video game rental industry as well as friendly borrowing, both of which I am sure trigger a good portion of new video game sales to begin with. So not only would it crush the aftermarket (used video game sales which, one assumes, are the target), but it runs the risk of killing the new-game market as well. Then there’s the environmental impact. Think about it: millions of games (and their packaging) rendered useless once they’re done being played. That’s as good an idea as those disposable DVDs Disney came up with. And, of course, not everyone can afford to plunk down $40-50 for a brand new game, so it would likely cut the available market considerably. Do people even think about this shit?

+OK, perhaps I’m being just a tad alarmist here. Surely a mod would be available within weeks if not days to disable such “protection,” but I just wish people would think about the consequences of their creations before building them at all.

diff --git a/export/2006-01-18-now-thats-what-i-love-to-hear.md b/export/2006-01-18-now-thats-what-i-love-to-hear.md new file mode 100644 index 0000000..b46baa5 --- /dev/null +++ b/export/2006-01-18-now-thats-what-i-love-to-hear.md @@ -0,0 +1,21 @@ +--- +title: "Now that’s what I love to hear" +date: 2006-01-18 18:07:40 +comments: false +tags: + - "coding" + - "JavaScript" + - "projects & products" +description: "I got an email the other day from Steven Mading, a developer at the BioMagnetic Resonance Bank at the University of Wisconsin . In it, he shared his experience using jsTrace and, with his permission, I’m sharing it with all of you:" +permalink: /archives/now-thats-what-i-love-to-hear/ +--- + +I got an email the other day from Steven Mading, a developer at the BioMagnetic Resonance Bank at the University of Wisconsin. In it, he shared his experience using jsTrace and, with his permission, I’m sharing it with all of you:

+> I just thought I’d give a quick thank you to you for the little jsTrace JavaScript utility you made available online. I found it from a Google search and it was exactly what I needed.
+>
+> It really helped me a lot. I had a problem with some widgets on an HTML form that had a lot of JavaScript hooks (things like onblur, onclick, onfocus, etc). The events were occurring in a weird order and I couldn’t trace what was happening. Using the standard alert() function was useless because making an alert window POP up caused the events to be different and changed the relevant behavior (since onfocus and onblur were a relevant part of the behavior, popping up a window changes the focus and invalidates the debugging information when what I’m trying to do is figure out why the focus changes aren’t happening the way I expect.)
+>
+> Your jsTrace allowed me to figure out the problem (which, as it turns out, was that when I clicked on Widget B, I was calling BOTH the onclick for Widget B and the onblur for Widget A, but not always in a predictable order). So once I knew that was happening, I was able to redesign my code to work either way and thus fix the bug.
+>
+> Again, thank you for making this tool publicly available.

+

I love it when things work out like that. It makes it all worthwhile.

+Have you had an experience with using jsTrace that you’d like to share? Do you use it or any other scripts we’ve built often? Are any of the user enhancement scripts in use on production websites? Let us know your thoughts, good or bad.
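Steven’s fix hinged on seeing the real order of onclick and onblur without an alert() dialog stealing focus and changing the very events he was trying to watch. A minimal JavaScript sketch of that idea: an in-memory trace log plus a wrapper that records each handler call before running it. `createTracer`, `log`, `wrap`, and `messages` are hypothetical names for illustration only; this is not jsTrace’s actual API.

```javascript
// A minimal, non-modal trace log in the spirit of jsTrace.
// Hypothetical: createTracer, log, wrap and messages are illustrative
// names, not jsTrace's actual API.
function createTracer(now = Date.now) {
  const entries = [];

  const tracer = {
    // Record a message in memory; unlike alert(), this never opens a
    // dialog, so it cannot move focus or trigger extra blur events.
    log(message) {
      entries.push({ time: now(), message });
    },

    // Wrap an event handler so each call is logged before it runs.
    wrap(label, handler) {
      return function (event) {
        const type = event && event.type ? " (" + event.type + ")" : "";
        tracer.log(label + type);
        return handler.call(this, event);
      };
    },

    // The logged messages, in the order the handlers actually fired.
    messages() {
      return entries.map((entry) => entry.message);
    },
  };

  return tracer;
}
```

In a page, you might assign something like `widgetA.onblur = tracer.wrap("Widget A onblur", handleBlur)` (where `handleBlur` is a hypothetical stand-in for the existing handler) and write `tracer.messages()` into a textarea after the interaction. Because nothing is displayed until you ask for it, the log reflects the event order the user actually produced.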

diff --git a/export/2006-02-02-a-load-of-malarkey.md b/export/2006-02-02-a-load-of-malarkey.md new file mode 100644 index 0000000..c500853 --- /dev/null +++ b/export/2006-02-02-a-load-of-malarkey.md @@ -0,0 +1,20 @@ +--- +title: "A Load of Malarkey" +date: 2006-02-02 00:18:04 +comments: true +tags: + - "browsers" + - "CSS" + - "web standards" +description: "Microsoft released Internet Explorer 7 Beta 2 for public consumption yesterday. Based on everything I’d been reading, the development team seemed to be moving in the right direction . I decided to take it for a test drive to see how..." +permalink: /archives/a-load-of-malarkey/ +--- + +Microsoft released Internet Explorer 7 Beta 2 for public consumption yesterday. Based on everything I’d been reading, the development team seemed to be moving in the right direction. I decided to take it for a test drive to see how things were coming along.

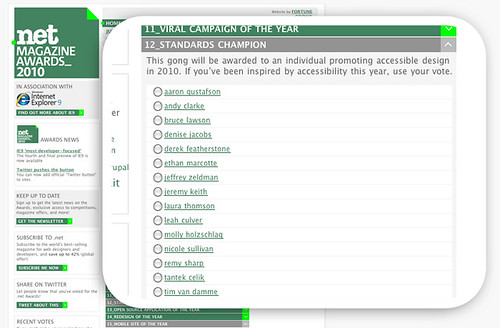

+The answer is “not well” I’m afraid. I took my first journey in the new browser over to my friend Andy’s house. I did this mainly because I love how Andy handles IE. It’s beautiful. I wanted to see if IE7 did the right thing and got the real design for And All That Malarkey. As it began rendering, my heart was racing and I was ecstatic to see the beautiful blues and reds of Andy’s blog coming through. Then, something a little odd happened. Somehow IE7 missed the boat and the page was rendered virtually unreadable (except the latest articles section) because a bunch of And All That Malarkey logos kept popping up everywhere.

+Now according to Chris Wilson over at Microsoft (whose team has been working closely with the wonderful folks at WaSP to make IE7 standards-compliant):

+> Beta 1 makes little progress for web developers in improving our standards support, particularly in our CSS implementation. I feel badly about this, but we have been focused on how to get the most done overall for IE7, so due to our lead time for locking down beta releases and ramping up our team, we could not get a whole lot done in the platform in beta 1. However, I know this will be better in Beta 2

+

At the same time, I know Andy’s a stand-up guy and his CSS is top-notch (easily some of the best I’ve seen), so this problem’s gotta lie with IE7. I guess you can only expect so much from a beta (even a “beta 2”), but that’s a doozy of an error. At least IE7 was easy to uninstall and I’ve got some commentary to leave on the IE7 Beta 2 feedback form.